The $200 Problem with Claude Code

Claude Code is powerful because:

- It runs advanced models like Opus 4.5

- It scaffolds entire applications

- It handles tool calling and terminal workflows

- It manages dependencies automatically

- It builds test harnesses

But what you’re really paying for is cloud infrastructure.

Your code prompts are processed on external hardware.

Your data leaves your system.

Your usage scales your bill.

For heavy dev workflows, that becomes a recurring $200/month dependency.

That’s not sustainable long term.

2026 Is the Year of Open-Source AI

Open-source models have matured dramatically.

Today you can run powerful open models like:

- Llama 3

- GLM OCR

- GLM 4.7 Flash

- GPT OSS 20B

Locally. On your machine.

And the experience is shockingly close to premium cloud AI.

How the Free Local Claude Code Setup Works

Here’s the architecture shift:

Traditional Setup

Claude Code → Anthropic Cloud → Opus 4.5 → You pay $200

Local Setup

Claude Code CLI → Ollama → Local LLM → Runs on Your Machine → $0

Instead of Anthropic’s backend, you plug Claude Code into your localhost model server.

No external calls.

No billing.

No usage caps (the model's own context window still applies).

🛠 Step-by-Step: Build Your Free AI Coding Agent

Step 1: Install Ollama

Ollama lets you download and run open-source models locally. Get it from https://ollama.com/download

It:

- Hosts models on localhost

- Runs them efficiently

- Keeps your data private

- Simplifies model management

After installation, open a command prompt and run ollama list to see the installed models.
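The installed-models check can also be scripted. This is a minimal sketch, assuming Ollama is serving on its default address (http://localhost:11434) and that its /api/tags endpoint returns a JSON object with a "models" array. The helper names are made up for illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address (assumed unchanged)


def model_names(tags_payload):
    """Extract model names from a parsed /api/tags response."""
    return [entry["name"] for entry in tags_payload.get("models", [])]


def fetch_installed_models(base_url=OLLAMA_URL):
    """Ask the local Ollama server which models are installed.

    Raises OSError (for example URLError) if the server is not reachable.
    """
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as response:
        return model_names(json.load(response))
```

Calling fetch_installed_models() with Ollama running should return a list of model names. If the server is not running, the call raises an error instead of returning an empty list.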

Step 2: Download a Coding Model

For example, in the command prompt, run ollama pull followed by the model's name.

You now have a ~13GB model available locally.

Yes, it requires RAM.

Yes, hardware matters.

But you own it.

Step 3: Install Claude Code CLI

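Install Claude Code locally.

Claude CLI runs via Node.

In CMD, check the Node version with node -v:

v18.x.x → good.

In CMD (run as normal user), install the CLI with npm install -g @anthropic-ai/claude-code, then confirm it:

claude --version

By default, it uses Opus 4.5.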

We’re going to override that.
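The Node version check from this step can be automated. This is a small sketch that treats major version 18 as the minimum, taken from the "v18.x.x → good" note above. The function names are illustrative.

```python
import re
import shutil
import subprocess


def node_major(version_output):
    """Return the major version from output such as 'v18.19.0'."""
    match = re.match(r"v?(\d+)\.", version_output.strip())
    if match is None:
        raise ValueError(f"Unrecognized Node version: {version_output!r}")
    return int(match.group(1))


def node_is_supported(version_output, minimum_major=18):
    """True when the reported Node version meets the minimum major version."""
    return node_major(version_output) >= minimum_major


def installed_node_version():
    """Return the output of `node --version`, or None if Node is not on PATH."""
    node = shutil.which("node")
    if node is None:
        return None
    result = subprocess.run([node, "--version"], capture_output=True, text=True, check=True)
    return result.stdout
```

If installed_node_version() returns a string, pass it to node_is_supported() before installing the CLI.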

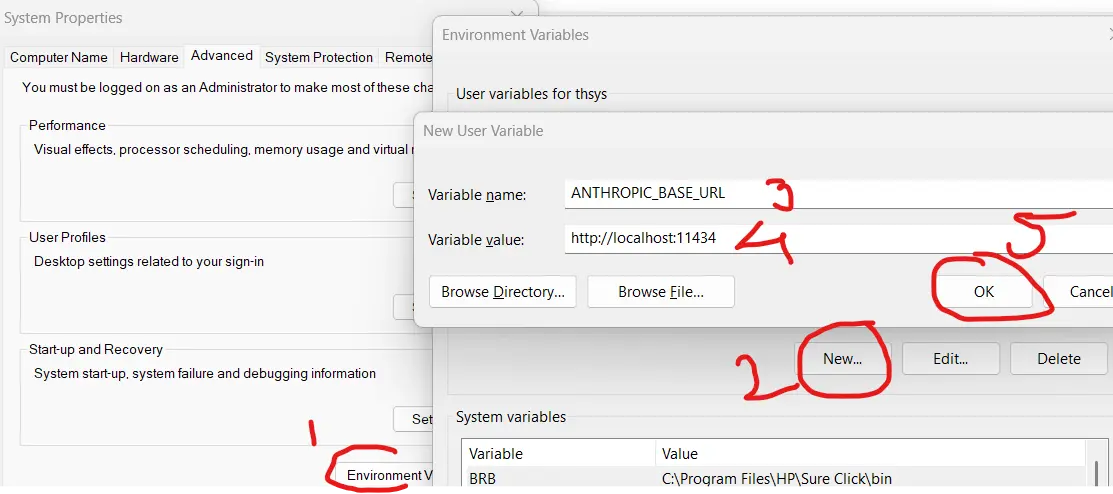

Step 4: Redirect Claude to Ollama

Set two environment variables:

1️⃣ Base URL

Point Claude to Ollama’s localhost server (http://localhost:11434 by default).

2️⃣ Dummy Token

Claude expects an API key. Give it any placeholder value.

It won’t be used.

Step 5: Switch Model

Tell Claude to use your local model by passing its name with the --model flag.

Now Claude Code is powered by your local LLM.
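Steps 4 and 5 can be combined in a small launcher. This is a sketch under several assumptions:

- The exact commands did not survive in this post, so the variable names ANTHROPIC_BASE_URL and ANTHROPIC_AUTH_TOKEN are assumptions about what Claude Code reads.
- The installed Ollama version is assumed to expose an Anthropic-compatible endpoint on its default port.
- The token value "ollama" is an arbitrary placeholder, and the helper names are hypothetical.

```python
import os
import shutil
import subprocess

OLLAMA_BASE_URL = "http://localhost:11434"  # Ollama's default local address


def claude_local_env(base_url=OLLAMA_BASE_URL, token="ollama"):
    """Copy the current environment and add the two overrides from Step 4."""
    env = dict(os.environ)
    env["ANTHROPIC_BASE_URL"] = base_url  # assumed variable name
    env["ANTHROPIC_AUTH_TOKEN"] = token   # assumed variable name; placeholder value
    return env


def claude_command(model):
    """Build the argument list for starting Claude Code with a given model (Step 5)."""
    return ["claude", "--model", model]


def launch_claude(model):
    """Start Claude Code against the local server and return its exit code."""
    executable = shutil.which("claude")  # also resolves claude.cmd on Windows
    if executable is None:
        raise FileNotFoundError("claude CLI not found on PATH")
    argv = [executable] + claude_command(model)[1:]
    return subprocess.call(argv, env=claude_local_env())
```

Call launch_claude with the name of a model you pulled in Step 2. The overrides apply only to the launched process, so your normal shell environment is left unchanged.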

Real Example: Create a Next.js App

Let's use VS Code with an extension.

Step 1

Open VS Code

Step 2

Go to Extensions (Ctrl + Shift + X)

Step 3

Search for Continue.

You will find: Continue - open-source AI code agent, by Continue.dev

Install the extension.

Prompt:

Create a hello world Next.js app

The local model will:

- Ask setup questions

- Generate package.json

- Create tsconfig

- Scaffold files

- Install dependencies

- Prepare dev server

Exactly like cloud Claude.

Then start the dev server (npm run dev in a standard Next.js project).

Boom.

Localhost:3000 → Hello World Next.js App.

Zero cloud calls.

Is It as Smart as Opus 4.5?

Honest answer?

Probably not.

But here’s the real insight:

For 80–90% of daily development tasks:

- CRUD apps

- API scaffolding

- React components

- Debugging

- Boilerplate generation

- Config generation

- Test writing

It’s more than sufficient.

And for complex reasoning?

You can still occasionally use cloud AI.

But your default workflow becomes free.

Major Advantages of Going Local

Zero API Costs

No $200/month subscription.

Full Data Privacy

No code leaves your system.

Perfect for:

- Enterprise apps

- Government projects

- HealthTech

- FinTech

No Token Anxiety

No usage tracking.

Offline Capability

Works without internet.

Total Ownership

You control the stack.

The Only Real Tradeoff

You need decent hardware.

- 16GB RAM minimum

- 32GB ideal

- SSD required

- GPU optional but powerful

If your system is weak, choose smaller models.

Response time depends on your machine and on the size of the model you pick.
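The "choose smaller models" advice can be turned into a rough rule of thumb. This sketch assumes a model needs about its download size in memory and that a few gigabytes should stay free for the OS and editor. The 4 GB headroom and the model names and sizes in the usage line are hypothetical placeholders, not measurements.

```python
def largest_model_that_fits(models_gb, ram_gb, headroom_gb=4.0):
    """Pick the largest model whose size fits in RAM after reserving headroom.

    models_gb maps a model name to its approximate size in GB.
    Returns the chosen name, or None if nothing fits.
    """
    budget = ram_gb - headroom_gb
    fitting = {name: size for name, size in models_gb.items() if size <= budget}
    if not fitting:
        return None
    return max(fitting, key=fitting.get)
```

For example, largest_model_that_fits({"small": 5.0, "medium": 13.0, "large": 19.0}, ram_gb=16) selects "small", because only 12 GB remain after the headroom.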

Strategic Insight for Tech Leaders

If you are:

- A CTO

- A DevOps head

- Running a 20+ developer team

- Managing AWS budgets

- Handling enterprise data

This is huge.

Instead of:

20 devs × $200/month = $4,000/month

You invest in:

- Better hardware

- Internal model servers

- On-prem AI infra

That’s a serious long-term cost advantage.
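The cost argument above is simple arithmetic and can be sketched as a break-even calculation. The $200 seat price and the 20-developer team come from this post. The hardware budget and running cost are inputs you would supply yourself, and the function names are illustrative.

```python
import math


def monthly_subscription_cost(developers, price_per_seat=200.0):
    """Total monthly spend on per-seat subscriptions."""
    return developers * price_per_seat


def breakeven_months(hardware_cost, developers, price_per_seat=200.0,
                     monthly_running_cost=0.0):
    """Whole months until a one-time hardware spend is repaid by avoided fees.

    Returns None when running costs cancel out the monthly savings.
    """
    savings = monthly_subscription_cost(developers, price_per_seat) - monthly_running_cost
    if savings <= 0:
        return None
    return math.ceil(hardware_cost / savings)
```

With 20 developers, monthly_subscription_cost(20) gives 4000.0, matching the figure above. A hypothetical $24,000 hardware spend would then break even in 6 months, before electricity and maintenance.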

The Bigger Trend: AI Infrastructure Shift

We are entering a hybrid era:

- Cloud AI for heavy reasoning

- Local AI for daily coding

- Private AI for enterprise workflows

The sooner you understand local AI stacks,

the stronger your engineering advantage becomes.

2026 will belong to developers who:

- Own their infra

- Own their data

- Own their AI stack

Final Verdict

If you're paying $200/month for Claude Code, ask yourself:

Are you paying for intelligence?

Or are you paying for infrastructure?

Because now, you can build 90% of that experience:

✔ Locally

✔ Securely

✔ Privately

✔ For Free

And that changes everything.
